About Me

Hello there! I'm Kavi Gupta, a PhD candidate at MIT, studying neurosymbolic approaches to machine learning, with a focus on interpretability. I am advised by Prof. Armando Solar-Lezama. Contact me at kavig at mit dot edu.

Recent Publications

Sparling

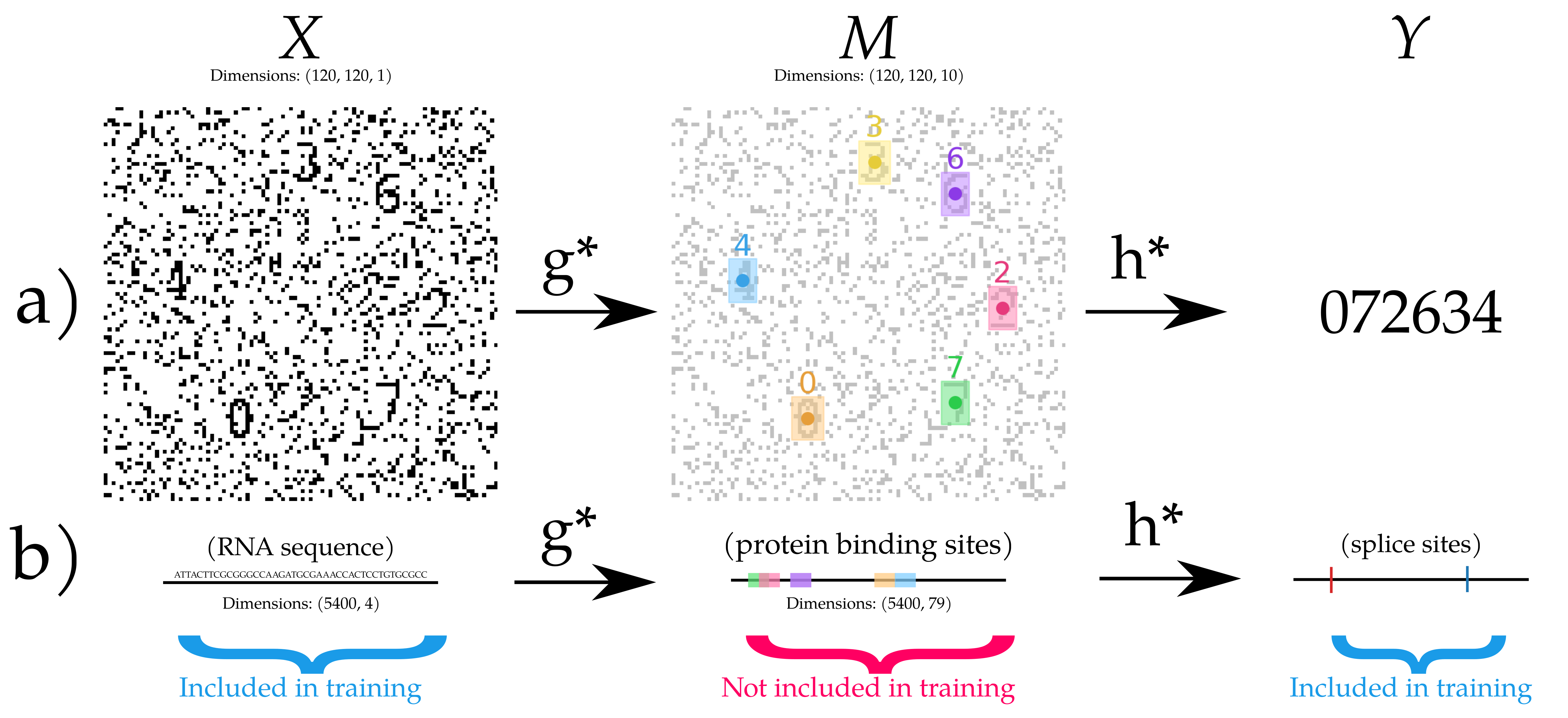

K. Gupta, O. Bastani, and A. Solar-Lezama. “Sparling: End-to-End Spatial Concept Learning via Extremely Sparse Activations.” The Fourteenth International Conference on Learning Representations (2026).

We prove it is possible to identify an extremely sparse intermediate latent variable with only end-to-end supervision, and introduce Sparling, an extreme activation sparsity layer and optimization algorithm that can learn such a latent variable

Sparse Adjusted Motifs

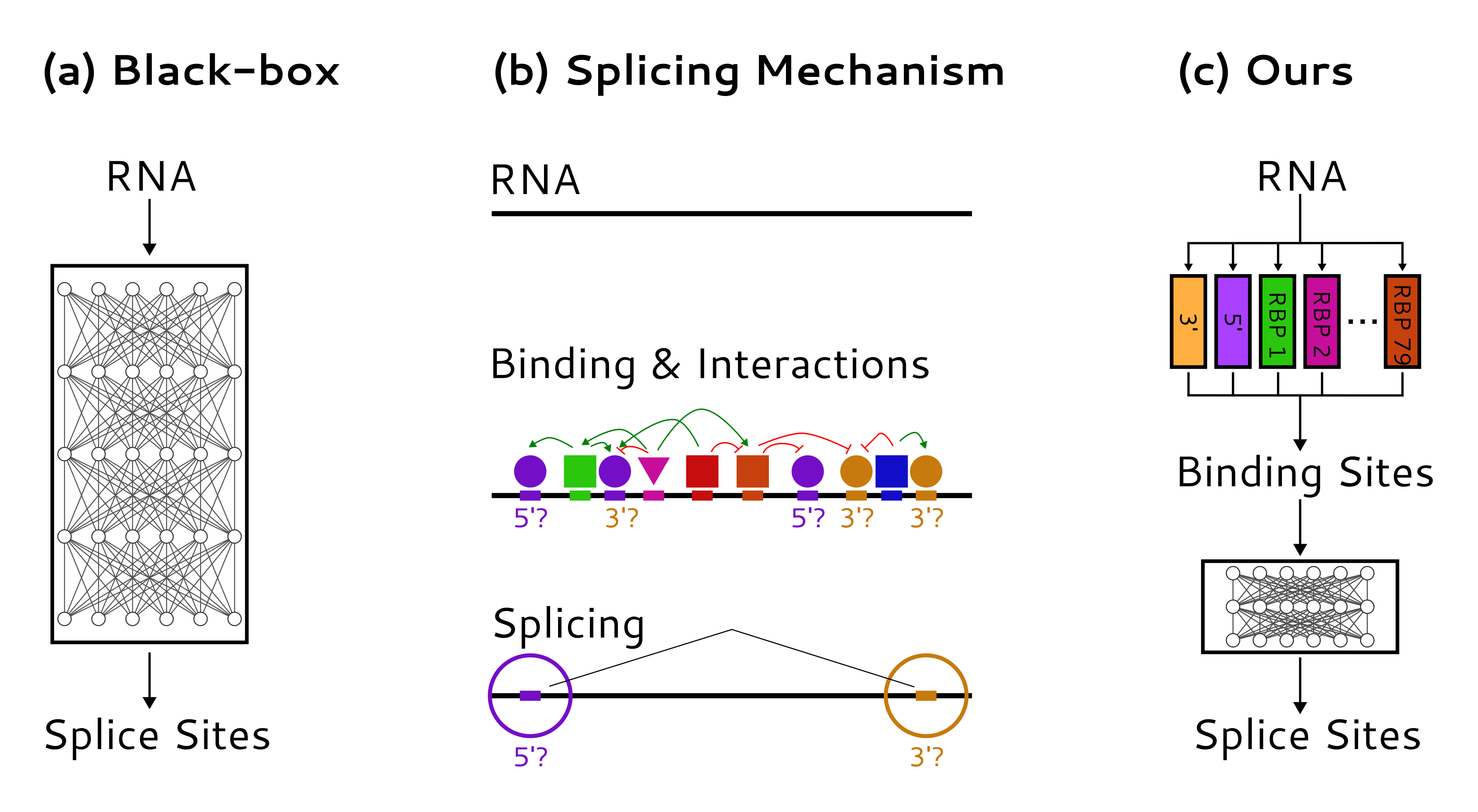

K. Gupta, C. Yang, K. McCue, O. Bastani, P. A. Sharp, C. B. Burge, and A. Solar-Lezama. “Improved modeling of RNA-binding protein motifs in an interpretable neural model of RNA splicing.” Genome Biology 25.1 (2024): 23.

We introduce a new model of RNA splicing refines predictions of rna-protein binding, a known intermediate step variable in the splicing process, by training on end-to-end splicing data. We validate that our model is superior to an unadjusted one in prediction of the intermediate as well as a causal counterfactual prediction task.

Links

Github: kavigupta

Google Scholar: Kavi Gupta

Personal Projects

Urban Stats: database of various statistics related to density, housing, race, transportation, elections, and climate change in the United States for a variety of regions; as well as density globally.

Visiting Every MBTA station: I visited every station! I’m also trying to 100% Cambridge on foot.